Chapter 7: When Everything Changes, Bet on What Doesn’t

AI leaders predict a radical disruption of the world economy. Here's why the ancient laws of human nature are the safest investment you can make right now.

“I very frequently get the question: ‘What’s going to change in the next ten years?’ I almost never get the question: ‘What’s not going to change in the next ten years?’ And I submit to you that that second question is actually the more important of the two.”

— Jeff Bezos

Something unusual is happening among the people building the most powerful AI systems on earth: they’re agreeing with each other.

Dario Amodei, the CEO of Anthropic, wrote in early 2026 that AI systems could change the world fundamentally within two years.1 In ten years, he said, all bets are off.2 He warned that half of all entry-level white-collar jobs could be eliminated within one to five years. He called the coming disruption “unusually painful” — and he picked those words carefully.3

Previous technological shocks only ever affected a narrow slice of human abilities, leaving room for people to adapt and expand into new roles. AI will hit the full range, and it will hit fast.

Mustafa Suleyman, the CEO of Microsoft AI and co-founder of DeepMind, is building what he calls “humanist superintelligence” — AI designed explicitly to serve humanity.4 He puts it plainly: “If you’re not a little bit afraid at this moment, then you’re not paying attention.”5 He predicts that roles in HR, marketing, project management, and legal will be among the first reshaped.

He also believes AI could cut the cost of energy a hundredfold within fifteen years, making nearly everything radically cheaper.6

Demis Hassabis, the Nobel Prize-winning CEO of Google DeepMind, places a fifty percent probability on achieving artificial general intelligence within five to ten years.7 He describes what’s coming as ten times bigger and ten times faster than the Industrial Revolution.8 He envisions an era of “radical abundance”: AI solving the root problems of disease, energy, and material scarcity.9

He also admits we may need an entirely new political philosophy to navigate it. Democracy, he says, might not be sufficient. Our current model of trading labor for money may not survive.10

Three of the most powerful people in AI. Three different companies. All saying the same thing: what’s coming will reshape everything.

So here’s the question this chapter tries to answer: if everything is about to change, what doesn’t?

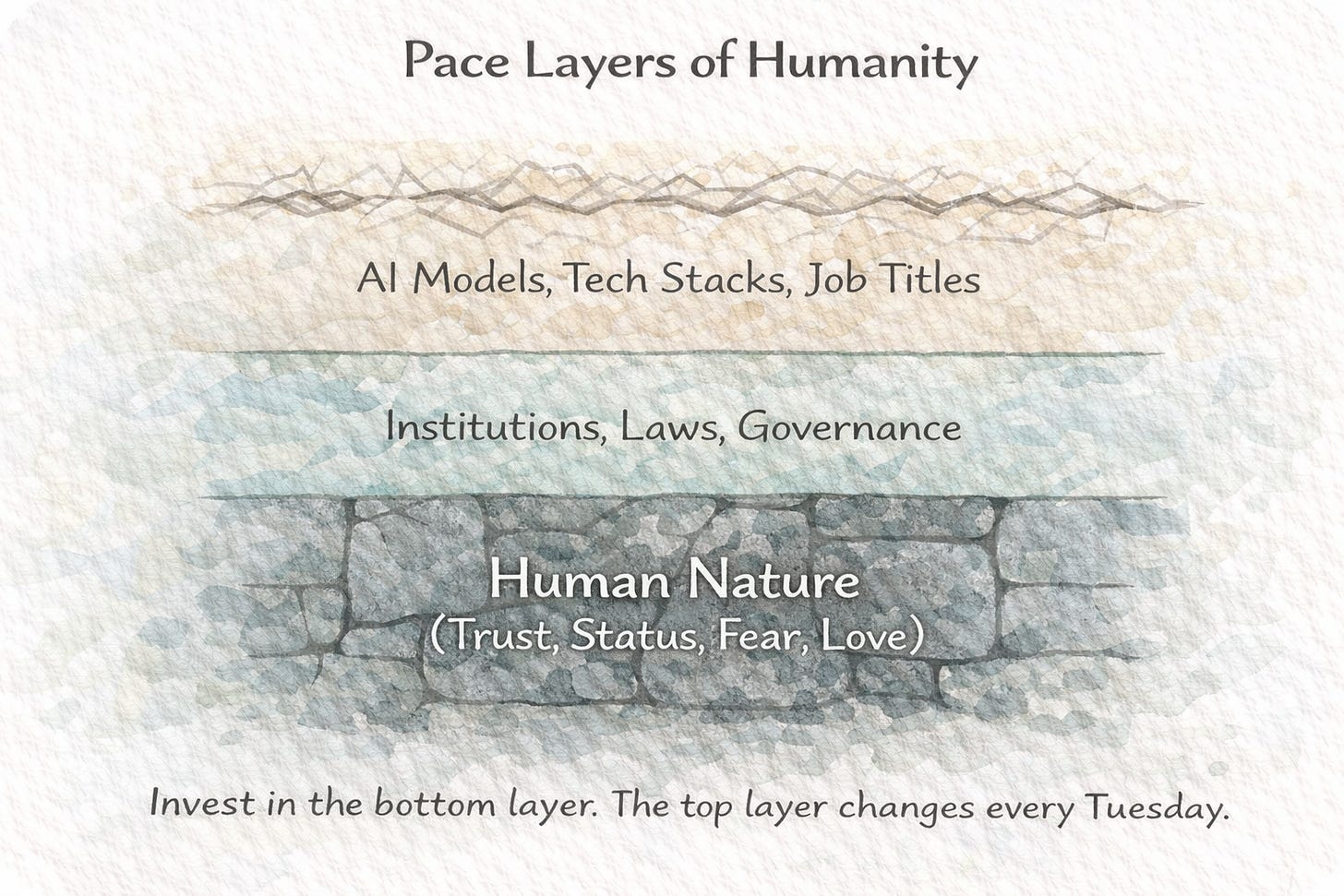

Betting on the Bedrock

The Lindy Effect offers a useful filter. For nonperishable things (ideas, technologies, books, cultural practices), the longer something has survived, the longer it’s likely to keep surviving.

A book that’s been in print for a hundred years has a better chance of being in print in another hundred years than a book published last Tuesday. A restaurant that’s been open for fifty years will probably outlast the trendy spot that opened last month.11

Nassim Taleb, who did more than anyone to popularize this idea, puts it directly: time is the ultimate filter. Everything fragile eventually breaks. What remains has demonstrated something profound about its relationship with reality.

Most people apply this to technologies, institutions, and practices. Fair enough. I think the more powerful application, especially right now, is to human needs: the deep, stubborn, ancient parts of who we are that don’t update with new software.

Jeff Bezos built Amazon using a version of this logic. He didn’t ask what would change in the next ten years. He asked what wouldn’t. Customers will always want lower prices, faster delivery, more selection. These desires won’t reverse. He bet everything on the constants. And because those things never shifted, every ounce of energy he poured into them kept compounding.12

That's the lens I want to apply here.

What human needs have been constant for millennia and will remain constant regardless of what emerges from any AI laboratory?

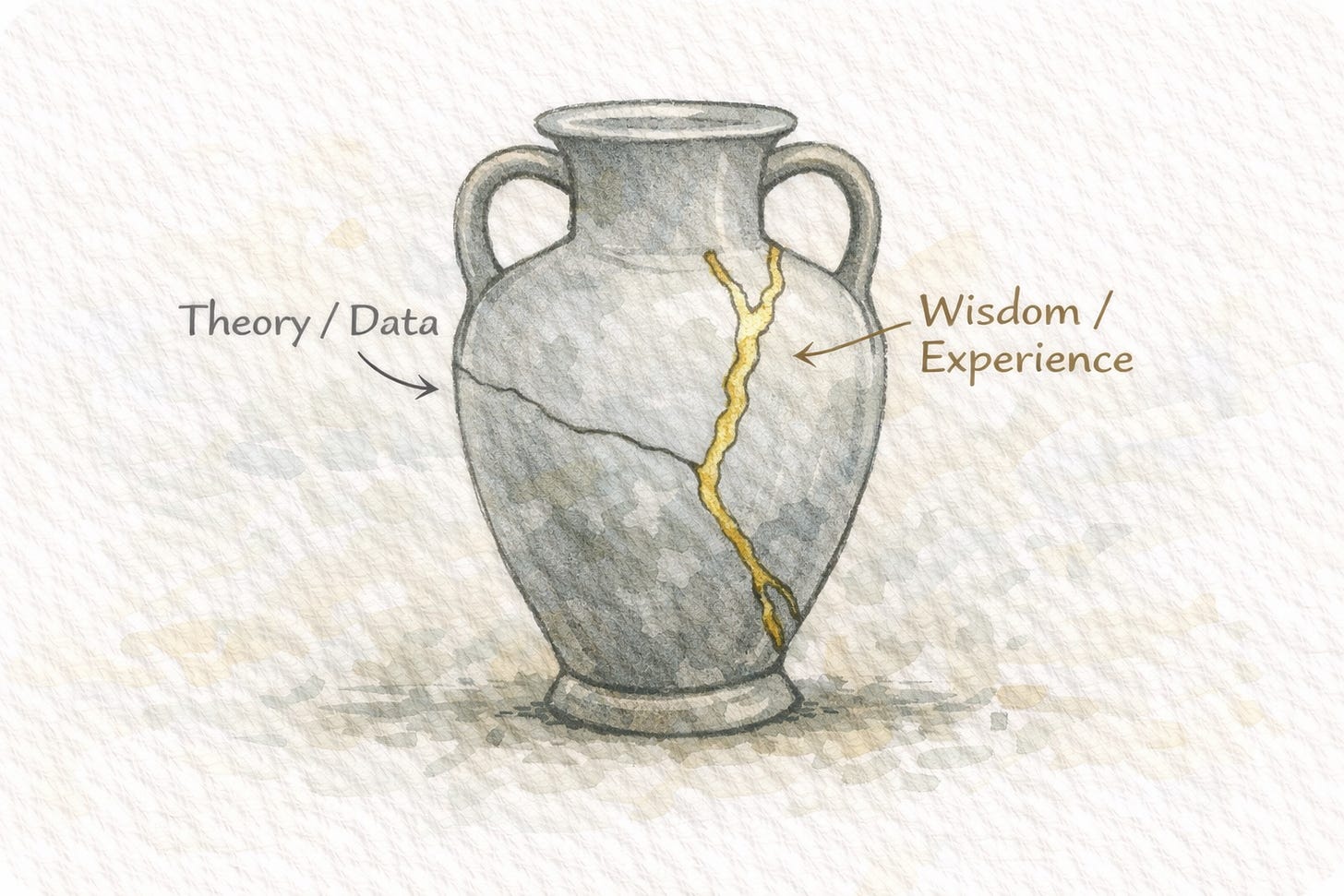

The Body Remembers What the Mind Forgets

During my years as a football player, I sustained the usual collection of injuries.

Knee problems. Ankle sprains. Adductor strains that flared up during cold winter training camps. Each one sidelined me from full practice for weeks, and sometimes even months. While my teammates practiced full drills and prepared for championship games, I’d be on the side of the pitch doing light rehabilitation work that barely qualified as exercise.

Those stretches of forced stillness taught me something I couldn’t have learned any other way: the difference between the discomfort you choose and the discomfort that chooses you.

During one of those winter training camps, in the middle of an intense endurance session, I felt a pressing pain in my chest that forced me to stop. I finished the session and decided to get it properly checked. I went to a cardiac clinic, and after several days of tests, they told me plainly: if I continued playing football, I risked ending my career on the pitch. They weren’t talking about retirement.

I was seventeen years old.

To put things into perspective, I wasn’t just some kid with big dreams. I played for the best clubs in the country. I’d been identified as talented from an early age (the kind of kid coaches pulled aside after matches). I won best player of the tournament in almost every championship I competed in. I’d even had the honor of representing the national team.

And now a doctor was telling me it was over.

Everything I had dreamed about since I was a kid — all the work, the discipline, the sacrifice my parents and I had poured into this for ten years — was shattered in a single sentence.

I walked out of that clinic and broke down in tears alongside my mother, who had been with me through every step. What followed were days and weeks of weighing, analyzing, going back and forth, until I finally made the decision to end my career. The day I walked into the locker room to break the news to my coach and my teammates was one of the hardest days of my life.

I was three months from graduating high school. I had no plan B. Apart from foreign languages and sports, I had no strong skills in any academic discipline. My entire identity had been organized around a future that no longer existed.

The Greeks had a phrase for this kind of education: pathei mathos — learning through suffering.

It’s knowledge that doesn’t live in your head. It lives in your body. In the chest that once betrayed you. In the memory of your mother’s face in the parking lot of a hospital. In the specific weight of walking into a room full of teammates and saying the words out loud.

AI can process every cardiology journal ever published. It can generate rehabilitation protocols more sophisticated than any sports physician could design alone.

What it cannot do is stand in that hallway at seventeen, feel the ground disappear, and carry that experience forward into a life it didn't choose.

Suleyman himself has drawn this distinction: AI creates only the perception of experience, what he calls “the seeming narrative of experience.” Actual experience, with its suffering, fear, and grief, requires a living body.13

Embodied experience has been a source of wisdom for as long as humans have existed. The body is the original teacher. It always has been. It always will be.

What Doesn’t Change

This pivotal moment in my life taught me that when the ground disappears beneath you, you stop looking for what’s new. You start looking for what’s solid.

After my football career ended, when I was trying to figure out what would matter in the next chapter of my life, I started compiling a list of those solids. I’ve updated it over the years, and it’s remarkable how little it changes.

Trust requires skin in the game.

Every society in recorded history has organized itself around trust. Trust between people who trade with each other. Trust between parents and children. Trust between leaders and the led.

The mechanisms for generating trust have changed over the years, but the underlying need has never moved an inch.

AI will make it easier to fake competence, fabricate credentials, and simulate sincerity. Deepfakes are already here. Synthetic voices can already pass for real ones. Within a few years, you may not be able to tell whether the person on the other end of a video call is flesh or pixels.

That’s exactly why trust will become more valuable. When the world drowns in convincing fakes, the ability to demonstrate that you have something real at stake becomes the rarest signal in the marketplace.

A financial advisor who invests her own money in the same funds she recommends. A doctor who would give his own child the same treatment he’s prescribing yours. A leader who eats last. These are ancient trust signals. They predate language. They predate civilization. They will outlast every model, every chatbot, and every algorithm that will ever be built.

Stories move people.

Anthropologists have yet to find a human culture that didn’t tell stories.

Yuval Noah Harari argues that stories are the fundamental technology of human cooperation.14 Currency is a story. Nations are stories. Corporations are stories. Even science communicates through narrative—the story of natural selection, the story of the Big Bang, the story of germ theory replacing miasma theory. We don’t process information as raw input. We process it as plot.

AI can generate more content in an hour than humanity produced in its first ten thousand years. But the stories that actually change minds have always come from someone who lived something and found the language to share it.

A memoir written by someone who walked through hell is categorically different from a memoir generated by a model trained on thousands of other memoirs. The words might look identical on the page. But we can feel the difference. We always have.

There’s a reason we still read Marcus Aurelius.15

He was a Roman emperor writing private notes to himself while camped on a freezing battlefield, trying to hold an empire together. That he was a real person, in a real situation, writing for no audience, is exactly what gives the Meditations their power nearly two thousand years later.

An AI generates content. A human bears witness. We’re about to be flooded with the former, which means we’ll pay a premium for the latter.

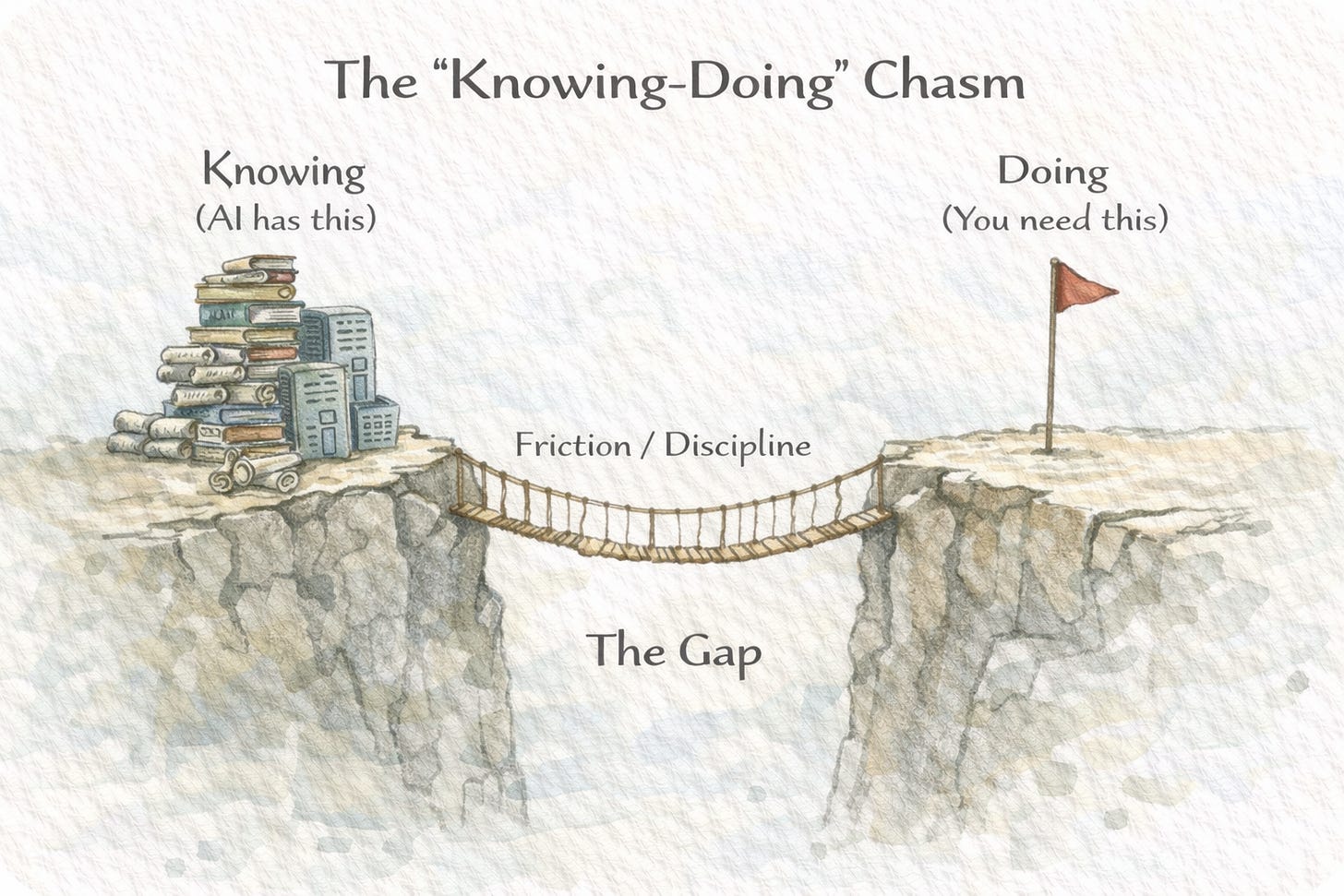

The gap between knowing and doing persists.

Most people already know what they should do. They don’t do it.

They know they should exercise, save money, be patient with their children, read more, sleep more, complain less, and focus on what they can control. Information has never been the bottleneck.

The Greeks called it akrasia — acting against your own better judgment. Socrates talked about it. Paul the Apostle wrote about it. Saint Augustine agonized over it. Every generation rediscovers this same limitation: we are creatures who reliably fail to do what we know is good for us.

AI will make advice more abundant and more personalized. Most people will read it, nod, and continue doing exactly what they were doing before.

The real advantage has never been in knowing what to do. It’s in the capacity to actually do it.

The need to be understood endures.

One of the most persistent human needs is being seen and understood by another conscious being.

A therapist can now be simulated with impressive accuracy. An AI companion can remember everything you’ve ever told it, never lose patience, never be in a bad mood, and always say something empathetic at the right moment. Millions of people will use these tools, and many will benefit from them.

But the hunger underneath, the ancient need to sit across from another person who has also suffered, who is also confused, who is also mortal, and to feel that they get it, is older than language. Social mammals have been seeking this kind of recognition for millions of years.

My daughter is one year old. She can’t speak yet. But when she looks at me and I look back, and she sees that I’ve seen her, something settles in her body. She relaxes. She feels safe. No technology will ever replicate what’s happening in that exchange, because the exchange depends on two mortal, fragile beings recognizing each other.

The capacity to truly see another person — to listen without an agenda, to sit with someone in their pain without rushing to fix it — has been rare and precious in every era. It will be rare and precious in every era that follows.

The status game never ends.

Evolutionary psychologists have spent decades studying what drives human behavior beneath the surface explanations we give ourselves. One finding is remarkably consistent: we are status-seeking animals, and no amount of material abundance has ever turned this off.

Hunter-gatherer societies had status hierarchies. Agricultural societies had them. Industrial societies had them. The wealthiest nations in history still have them. Scandinavian countries, with some of the highest standards of living on earth, haven’t escaped status competition. They’ve just redirected it toward who drives the most sustainable car, who has the most egalitarian household, and who vacations most responsibly.

Hassabis talks about “radical abundance”, a world where AI has solved scarcity, where energy is cheap and goods are plentiful and material deprivation is a thing of the past.

That’s a beautiful vision. And even if we get there, we’ll still be competing with each other. We’ll still be jockeying for position. We’ll still care deeply about where we stand relative to the people around us. The currency of the competition will change. The competition itself won’t.

This matters because even in the most optimistic AI scenario, the human skills that have always helped people navigate social complexity (reading a room, building alliances, earning genuine respect through demonstrated competence) will remain central to a life well lived.

Courage under uncertainty remains essential.

Amodei admits that no one can predict the future with complete confidence. Hassabis says we’re living through the most profound technological shift in human history. Suleyman says fear is healthy and necessary.16

Notice what all three are really saying: the future is radically uncertain, and you need to act anyway.

This is the human condition. It has always been the human condition.

The peasant farmer in medieval Europe didn’t know if the harvest would come. The immigrant arriving in a new country with two suitcases and no contacts didn’t know if the bet would pay off. When I walked out of that cardiac clinic, I had no map. Choosing to study marketing based on a vague hunch that understanding human behavior might matter was a leap made in the dark.

Fourteen years later, I design digital products for a living. The path from a football pitch in Moldova to a product design career in tech was not linear, not planned, and not optimized by any algorithm. It was built one uncertain step at a time, each one requiring a small act of courage that no machine could have performed on my behalf.

Every meaningful human endeavor has been undertaken in conditions of uncertainty. Courage has never meant the absence of fear. It means the willingness to act when the outcome is unknowable.

AI will reduce certain kinds of uncertainty. It will predict weather patterns with better accuracy. It will catch diseases earlier. It will model financial risks more precisely. The deepest uncertainties, though (‘Should I leave this career?’, ‘Should I start this company?’, ‘Should I marry this person?’), require the kind of courage only a person with something to lose can summon.

The Oldest Playbook

People will always want to feel understood.

People will always respect those who bear the cost of their own decisions.

People will always be moved by stories told by someone who lived them.

People will always struggle to do what they know they should.

People will always compete for status.

People will always need courage to act under uncertainty.

People will always learn more from their suffering than from their comfort.

None of this requires electricity.

Amodei, Suleyman, and Hassabis are describing a world that’s about to look unrecognizable on the surface. They’re probably right. The tools, the interfaces, the economics, the career paths, the daily rhythms — all of it may shift beyond what we can currently imagine.

Underneath all of that, the old machinery keeps humming. The same needs. The same drives. The same ancient architecture of what it means to be human.

I didn’t plan to become a product designer. I planned to become a footballer. Life had other ideas, and those ideas arrived in the form of a chest pain during a winter training session when I was seventeen.

Everything that came after — every career pivot, every new skill learned from scratch, every uncomfortable leap into unfamiliar territory — was built on the same foundation that has supported people through upheaval for thousands of years: the willingness to keep going when the plan falls apart, the discipline to do the work even when the direction is unclear, and the stubborn belief that showing up matters more than knowing exactly where you’re headed.

The tools will keep changing. They always have. The playbook for a good human life has been remarkably stable for millennia.

Bet on what doesn’t change. It’s the safest bet you’ll ever make.

1. Dario Amodei, “The Adolescence of Technology,” personal blog, January 2026. https://www.darioamodei.com/essay/the-adolescence-of-technology

2. Dario Amodei on 60 Minutes, CBS News, November 2025. Reported by Fortune: “Anthropic CEO Dario Amodei is ‘deeply uncomfortable’ with tech leaders determining AI’s future,” November 17, 2025.

3. CNBC, “Anthropic CEO Dario Amodei warns AI may cause ‘unusually painful’ disruption to jobs,” January 27, 2026.

4. Mustafa Suleyman, blog post announcing Microsoft’s Humanist Superintelligence initiative, November 2025. Reported by Fortune, November 6, 2025.

5. Mustafa Suleyman, interview with The National, December 29, 2025: “If you’re not a little bit afraid at this moment, then you’re not paying attention.”

6. Mustafa Suleyman, AFROTECH Conference 2025. Reported by AfroTech: “Microsoft AI CEO Mustafa Suleyman Says Superintelligence Must Always Work in Service of Humanity.”

7. Demis Hassabis, interview with WIRED, June 2025. He placed a “50% chance” of achieving AGI within 5 to 10 years.

8. Summary of Hassabis’s outlook on AI as “10x bigger and 10x faster than the Industrial Revolution,” reported by JoinHorizon.ai, January 4, 2026.

9. Demis Hassabis, interview with Axios at the AI+ SF Summit, December 5, 2025: “AGI, probably the most transformative moment in human history, is on the horizon.”

10. Demis Hassabis, TIME100 interview, April 2025: “We might need a new political philosophy.”

11. Nassim Nicholas Taleb, Antifragile: Things That Gain from Disorder (Random House, 2012).

12. Jeff Bezos, quoted widely from various shareholder letters and interviews.

13. Mustafa Suleyman, CNBC interview, November 2, 2025: “Only biological beings can be conscious.” On AI’s inability to experience suffering: “It’s really just creating the perception, the seeming narrative of experience.”

14. Yuval Noah Harari, Sapiens: A Brief History of Humankind (Harper, 2015), Chapter 2: “The Tree of Knowledge.”

15. Marcus Aurelius, Meditations, written c. 170–180 AD. Gregory Hays translation (Modern Library, 2002).

16. Amodei: “No one can predict the future with complete confidence.” From “The Adolescence of Technology,” January 2026. Hassabis: “the most profound technological shift in human history.” Axios, December 2025. Suleyman: “Fear is healthy and necessary.” The National, December 2025.
